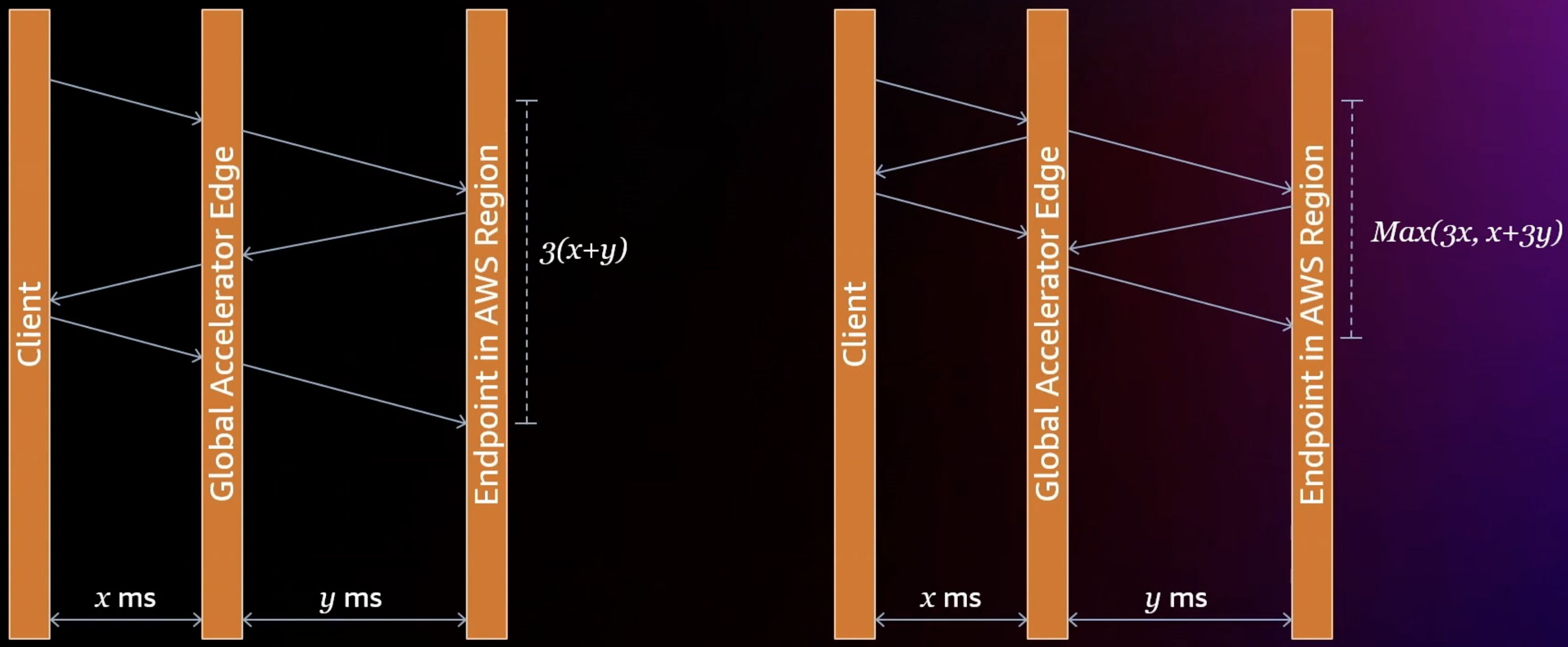

TCP termination at the edge

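TCP termination at the edge improves latency: the client's TCP handshake completes with the nearby edge PoP instead of travelling all the way to the target region.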
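To see where the saving comes from, here is a back-of-the-envelope sketch. It assumes a simplified model: a TCP handshake costs one round trip, TLS and any other handshakes are ignored, and with edge termination the edge-to-backend connection is assumed to be already open, so the client only pays the handshake to the nearby PoP. The RTT values are hypothetical.

```python
def request_latency_ms(client_edge_rtt_ms: float,
                       edge_backend_rtt_ms: float,
                       edge_terminated: bool) -> float:
    """Latency of a TCP handshake plus one request/response exchange.

    Simplified model (assumption): the handshake costs one RTT, TLS is ignored,
    and with edge termination the edge-to-backend leg is already connected.
    """
    end_to_end_rtt = client_edge_rtt_ms + edge_backend_rtt_ms
    # Without edge termination the SYN/SYN-ACK travels the whole path.
    handshake = client_edge_rtt_ms if edge_terminated else end_to_end_rtt
    # The request and its response always traverse the full path.
    return handshake + end_to_end_rtt


# Hypothetical RTTs: 20 ms client->edge, 150 ms edge->target region.
without_edge = request_latency_ms(20, 150, edge_terminated=False)
with_edge = request_latency_ms(20, 150, edge_terminated=True)
print(without_edge, with_edge)  # prints "340 190"
```

In this model the saving equals the edge-to-region RTT, so it grows with the distance between the client's PoP and the target region.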

GA also optimizes throughput over the long fat network (LFN) between the edge and the target region:

- Tune TCP parameters for a high bandwidth-delay product (BDP)

- Use a high `initcwnd` to cheat TCP slow start

- Jumbo frames

- Large receive window and TCP buffer tuning

- Large congestion window (congestion algorithm?)
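The arithmetic behind these bullets can be sketched as follows. The BDP is how many bytes must be in flight to keep a path busy; a receive window or socket buffer smaller than the BDP caps throughput at window/RTT, and idealized slow start needs roughly log2(BDP / initial window) round trips to open up. The link speed and RTT are hypothetical, and the model ignores loss, delayed ACKs, and whichever congestion control algorithm is actually in use.

```python
def bdp_bytes(bandwidth_bps: float, rtt_ms: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the pipe."""
    return bandwidth_bps * rtt_ms / 1000 / 8


def window_limited_bps(window_bytes: float, rtt_ms: float) -> float:
    """Throughput ceiling when at most window_bytes can be unacknowledged."""
    return window_bytes * 8 * 1000 / rtt_ms


def slow_start_rtts(target_bytes: float, initcwnd_segments: int, mss: int) -> int:
    """RTTs for idealized slow start (cwnd doubles each RTT) to reach target_bytes."""
    cwnd = initcwnd_segments * mss
    rtts = 0
    while cwnd < target_bytes:
        cwnd *= 2
        rtts += 1
    return rtts


# Hypothetical link: 10 Gbit/s between edge and target region, 100 ms RTT.
bdp = bdp_bytes(10e9, 100)
print(bdp)                                # 125000000.0 bytes (125 MB)
print(window_limited_bps(65_535, 100))    # 5242800.0 bit/s without window scaling
print(slow_start_rtts(bdp, 10, 1460))     # 14 RTTs from initcwnd = 10
print(slow_start_rtts(bdp, 100, 1460))    # 10 RTTs from initcwnd = 100
```

In this model, raising `initcwnd` from 10 to 100 segments saves 4 round trips (about 400 ms at 100 ms RTT), while a window stuck at the 65,535-byte maximum without window scaling caps throughput at about 5 Mbit/s no matter how long the connection runs.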

Jumbo frames

Since March 2025, EC2 supports jumbo frames of up to 8,500 bytes over cross-region VPC peering. AWS Global Accelerator may use an even larger MTU, around 9,000 bytes.
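A rough sketch of why larger frames help: with a bigger MTU each packet carries a larger TCP payload, so the same transfer needs fewer packets and spends less of the wire on headers. It assumes IPv4 and TCP headers without options (40 bytes per packet) and ignores Ethernet framing; the 9,000-byte case mirrors the speculation above rather than a documented GA value.

```python
import math

HEADER_BYTES = 40  # IPv4 (20) + TCP (20), no options: simplifying assumption


def packets_and_efficiency(payload_bytes: int, mtu: int) -> tuple[int, float]:
    """Packets needed for payload_bytes and the fraction of wire bytes that are payload."""
    mss = mtu - HEADER_BYTES
    packets = math.ceil(payload_bytes / mss)
    wire_bytes = payload_bytes + packets * HEADER_BYTES
    return packets, payload_bytes / wire_bytes


for mtu in (1500, 8500, 9000):
    packets, efficiency = packets_and_efficiency(1_048_576, mtu)  # 1 MiB transfer
    print(f"MTU {mtu}: {packets} packets, {efficiency:.2%} payload")
```

The header saving is only a few percent; the bigger effect is the roughly sixfold drop in packet count, which reduces per-packet processing on every hop.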

Cellular architecture and shuffle sharding

Each accelerator is assigned two network zones in each PoP, and each zone serves one static IP address (two, one IPv4 and one IPv6, when IPv6 is enabled). Each zone is further divided into 4 cells, across which shuffle sharding is applied.
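A minimal sketch of the shuffle-sharding idea applied to the 4 cells of one zone. The shard size of 2 cells per accelerator, the SHA-256 hashing, the function name, and the accelerator IDs are all hypothetical; the source does not say how GA picks cells. Each accelerator is deterministically mapped to one of the C(4, 2) = 6 possible pairs of cells.

```python
import hashlib
from itertools import combinations

CELLS_PER_ZONE = 4  # from the text
SHARD_SIZE = 2      # hypothetical: cells assigned to each accelerator


def shuffle_shard(accelerator_id: str,
                  cells: int = CELLS_PER_ZONE,
                  shard_size: int = SHARD_SIZE) -> tuple[int, ...]:
    """Deterministically map an accelerator to one combination of cells."""
    shards = list(combinations(range(cells), shard_size))
    digest = hashlib.sha256(accelerator_id.encode()).digest()
    index = int.from_bytes(digest[:8], "big") % len(shards)
    return shards[index]


shard_a = shuffle_shard("accelerator-a")  # hypothetical IDs
shard_b = shuffle_shard("accelerator-b")
print(shard_a, shard_b, set(shard_a) & set(shard_b))
```

If a misbehaving accelerator takes down both of its cells, only accelerators assigned the exact same pair lose all of their capacity; any other pair keeps at least one healthy cell. With uniform hashing that is about 1 in 6 of the other accelerators, versus all of them if every accelerator used every cell.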

Anycast

Routes for the static IP addresses are advertised from multiple edge PoP locations. When GA’s EC2 instances detect loss of backbone connectivity, the routes are automatically withdrawn.
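The anycast behavior can be modeled with a toy route selection. Each client network reaches whichever advertising PoP its BGP best path prefers; the single `cost` number is a hypothetical stand-in for the real BGP decision process, and the PoP names and costs are made up. Withdrawing the routes from a PoP that lost backbone connectivity simply removes it from the candidates, so traffic shifts to the next-best PoP.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PoP:
    name: str
    cost: int                 # stand-in for BGP best-path preference (lower wins)
    backbone_ok: bool = True  # routes are withdrawn when this becomes False


def select_pop(pops: list[PoP]) -> Optional[str]:
    """Return the PoP a client would reach for the anycast static IPs."""
    # Only PoPs still advertising the routes (healthy backbone) are candidates.
    advertising = [p for p in pops if p.backbone_ok]
    if not advertising:
        return None  # no route to the static IPs at all
    return min(advertising, key=lambda p: p.cost).name


pops = [PoP("pop-a", 10), PoP("pop-b", 15), PoP("pop-c", 20)]
print(select_pop(pops))      # nearest PoP: pop-a
pops[0].backbone_ok = False  # pop-a detects backbone loss and withdraws its routes
print(select_pop(pops))      # traffic shifts to pop-b
```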

Each PoP location has 4 BGP speakers placed in 4 different AWS regions for redundancy.
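A rough availability sketch of why spreading the speakers across regions helps. It assumes a PoP's advertisement is lost only when all of its speakers are down, that speaker and regional failures are independent, and that a regional outage takes down every speaker in that region. The function name and probabilities are hypothetical.

```python
def p_advertisement_lost(p_speaker: float, p_region: float,
                         speakers: int = 4, same_region: bool = False) -> float:
    """Probability that every BGP speaker of a PoP is down at the same time."""
    if same_region:
        # One regional outage removes all speakers; otherwise all must fail on their own.
        return p_region + (1 - p_region) * p_speaker ** speakers
    # Each speaker is down if its own region is down or it fails independently.
    p_down = p_region + (1 - p_region) * p_speaker
    return p_down ** speakers


same = p_advertisement_lost(0.01, 0.001, same_region=True)
spread = p_advertisement_lost(0.01, 0.001, same_region=False)
print(f"{same:.2e} vs {spread:.2e}")
```

In this model the same-region layout is bounded below by the regional outage probability, while spreading the speakers multiplies four small probabilities together.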